I’m thinking about using Undetectable AI’s Humanizer to rewrite content so it passes AI detection tools, but I’m not sure if it actually works in real-world situations. Has anyone tested it on different AI detectors, and did it stay readable and natural for human readers? I’d really appreciate detailed feedback on accuracy, safety, and any risks for SEO or academic use.

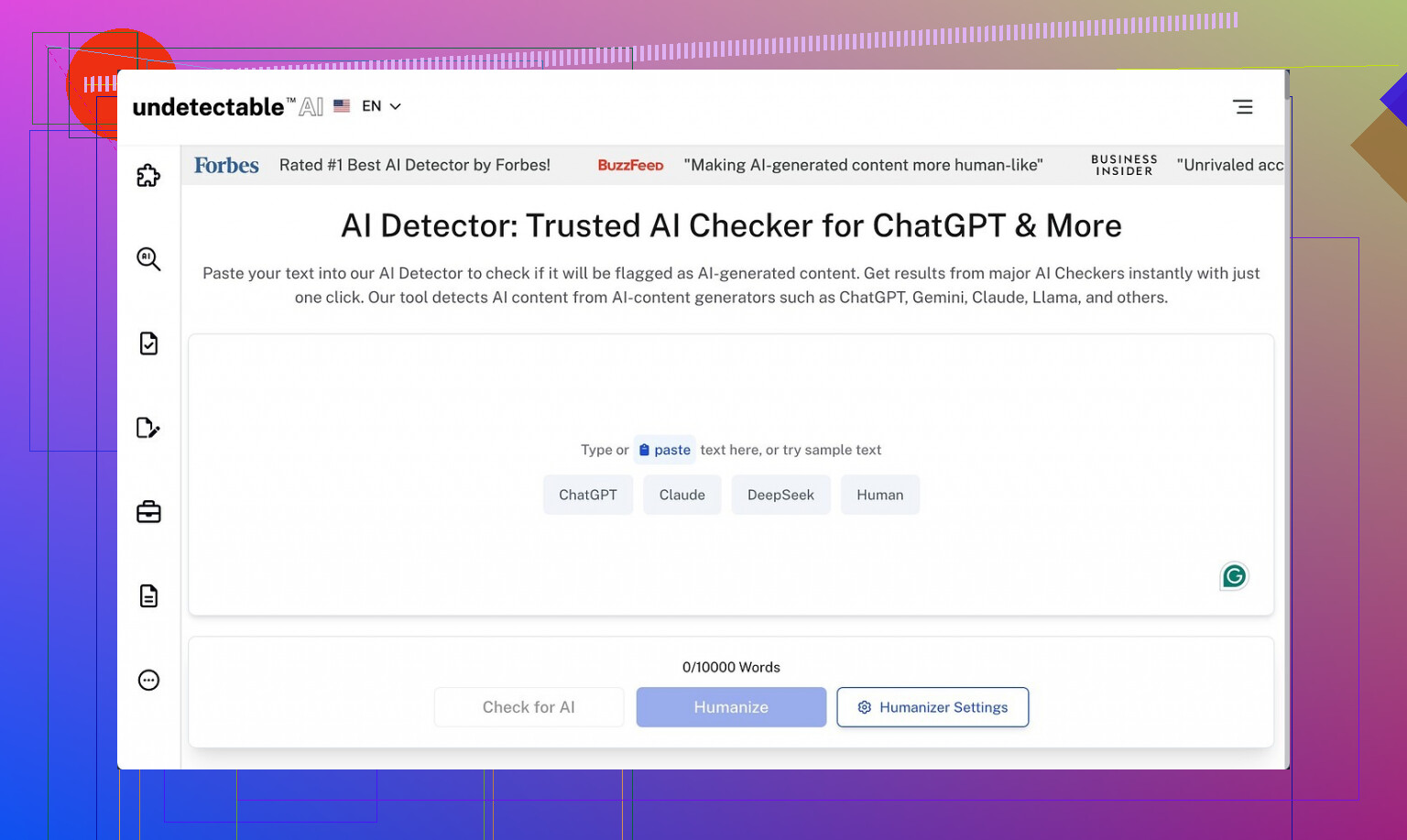

Undetectable AI

I spent a weekend messing around with Undetectable AI, only using the free Basic Public model, since that is the only option without putting in a card. I went in expecting junk. It did better on detectors than I thought.

I pushed a few longform samples through it, then checked them on ZeroGPT and GPTZero. On the “More Human” setting, I kept seeing ZeroGPT scores drop to around 10% AI and GPTZero around 40% AI. That beat a bunch of paid tools I tested earlier in the month that sat in the 60 to 90% AI range on the same pieces.

If you upgrade, they say you get “Stealth” and “Undetectable” models, plus five reading levels, nine purpose presets, and a slider for how strong the rewrite is. Based on how the free model behaves, I would expect the paid stuff to push detection scores even lower, but I did not pay to confirm.

Now the problem. The writing quality was rough.

On “More Human” I would give it maybe 5 out of 10. It kept shoving first-person language like “I think” and “in my experience” into content where that made no sense, including technical explainers. It did this across almost every test. I also saw the same phrases repeated, plus weird fragments that read like someone stopped mid-thought.

“More Readable” did a bit better, so I tried it on some blog-style text. It removed some of the awkward first person spam and tightened a few sentences, but it still did not hit the level I would publish without another heavy edit pass. You still have to fix tone, cut repetition, and clean transitions by hand.

Pricing starts at $9.50 per month on the annual plan for 20,000 words. That is not terrible on paper, but there are two things you should know before you throw money at it:

- The privacy policy is more nosy than I like. They collect detailed demographic info, including income bracket and education level. If you care about data profiling, read that page slowly before signing up.

- The refund policy is not as friendly as the marketing line suggests. To get your money back, you have to prove your content scored under 75% human on detectors within 30 days. So if your use case is mixed content or you use different detectors than they accept, your odds of a refund drop fast.
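To put the quoted price in per-article terms, here is a rough sketch of the arithmetic. It uses only the $9.50 and 20,000-word figures from above. The 1,500-word article length is an illustrative assumption, and so is the idea that every submitted word counts once against the quota (retries and re-runs would eat more).

```python
# Figures from the post: $9.50 per month (annual plan) for 20,000 words.
MONTHLY_PRICE_USD = 9.50
MONTHLY_WORD_QUOTA = 20_000


def cost_for_words(words: int) -> float:
    """Return the share of the monthly fee that `words` words consume."""
    return MONTHLY_PRICE_USD * words / MONTHLY_WORD_QUOTA


def articles_per_month(article_words: int) -> int:
    """Return how many whole articles of the given length fit in the quota."""
    return MONTHLY_WORD_QUOTA // article_words


if __name__ == "__main__":
    # 1,500 words is an assumed article length, used only for illustration.
    print(f"Per 1,000 words: ${cost_for_words(1_000):.3f}")
    print(f"Per 1,500-word article: ${cost_for_words(1_500):.2f}")
    print(f"1,500-word articles per month: {articles_per_month(1_500)}")
```

So the sticker price is well under a dollar per article, but that only holds if you never re-run a piece.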

My takeaway from using it:

• Good if your only priority is lowering AI detection scores on the cheap, and you are okay rewriting the output by hand.

• Weak if you want clean, ready-to-publish text out of the box.

• Questionable if you are sensitive about data collection or want a simple, no-conditions refund promise.

Reference:

Undetectable AI review and proof thread:

I’ve been playing with Undetectable AI for client stuff the last few weeks, so here is what I saw in real use, not promo copy.

Short answer on detection

Yes, it lowers scores on popular detectors, but results jump around.

My tests:

• Models used

GPT 4 and Claude outputs as input. Longform articles 800 to 2,500 words.

• Detectors used

GPTZero, ZeroGPT, Originality.ai, Writer.com detector, Copyleaks.

On the “More Human” and “Neutral” style:

• ZeroGPT often dropped to under 20 percent AI.

• GPTZero landed at 30 to 60 percent AI, depending on topic.

• Originality.ai stayed picky, often 40 to 70 percent AI even after “More Human”.

• Writer.com detector went from “obviously AI” to “mixed or unclear” most of the time.

• Copyleaks gave the most random results. Sometimes near human, sometimes flagged most of the text.

So I partly agree with what @mikeappsreviewer said. It does better than a lot of cheap tools. I do not agree on one point. For me, “More Readable” was worse on detectors than “More Human”. “More Readable” often pushed patterns that looked like clean AI writing again. So if your goal is detectors, I would avoid that mode.

Quality of writing in real work

I used it on:

• Technical SaaS docs

• Legal style policy text

• Affiliate posts

• Email newsletters

Problems I kept seeing:

• Same phrases over and over. Stuff like “on the other hand” and “it is important to note” spammed in multiple spots.

• Tone shifts inside one article. Starts formal, then randomly goes chatty.

• Awkward insertion of opinions into neutral content. Similar to what Mike mentioned, but in my runs it used less “I think” and more “you might find”, which felt wrong in policy pages.

• Paragraphs sometimes turned into weird half sentences or clunky transitions that looked stitched together.

I would not drop the output into a site without a manual edit pass. For a 1,500 word article, I spent 20 to 30 minutes fixing tone, trimming fluff, and re-aligning with brand voice.
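To see what that cleanup time adds up to, here is a back-of-the-envelope sketch. It assumes the 20 to 30 minutes per 1,500-word article scales linearly with word count, which is a simplification, and it uses the 20,000-word plan size mentioned earlier in the thread as the example volume. The function name and defaults are mine, only for illustration.

```python
def editing_hours(
    total_words: int,
    article_words: int = 1_500,
    minutes_per_article: tuple[float, float] = (20.0, 30.0),
) -> tuple[float, float]:
    """Return (low, high) hours of manual editing for `total_words` words.

    Assumes editing time scales linearly with length, based on the
    20 to 30 minutes per 1,500-word article reported above.
    """
    articles = total_words / article_words
    low_minutes, high_minutes = minutes_per_article
    return articles * low_minutes / 60, articles * high_minutes / 60


if __name__ == "__main__":
    low, high = editing_hours(20_000)
    print(f"Editing 20,000 words: roughly {low:.1f} to {high:.1f} hours")
```

Under those assumptions, a full 20,000-word month means several hours of hand editing on top of the subscription.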

Real world issues you should factor in

- Detection tools are inconsistent

I ran the same text through the same detector on different days and got slightly different scores. If your business model relies on “must pass every detector every time” you will chase ghosts.

- Longer text gets hit harder

Any AI detector tends to flag long articles more. When I split long posts into 300 to 400 word chunks before feeding to Undetectable and then reassembled, I sometimes got better scores than running the whole thing at once. Clunky workflow though.

- Risk of overdoing the rewrite

If you crank the settings too high, the text loses structure and intent. I had one case where a how-to guide lost key steps because the tool tried to “humanize” them into vague language.
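If you want to see how much a detector wobbles before trusting any single number, one option is to log the score from each run and look at the spread. This is a minimal sketch, not tied to any detector's API: scores are typed in by hand, the function name is mine, and the sample values are placeholders, not measurements.

```python
from statistics import mean


def summarize_runs(
    scores_by_detector: dict[str, list[float]],
) -> dict[str, dict[str, float]]:
    """Return mean, min, max and spread of "% AI" scores per detector."""
    summary = {}
    for detector, scores in scores_by_detector.items():
        if not scores:
            continue  # skip detectors with no recorded runs
        summary[detector] = {
            "mean": mean(scores),
            "min": min(scores),
            "max": max(scores),
            "spread": max(scores) - min(scores),
        }
    return summary


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not measured results.
    example_runs = {
        "detector_a": [12.0, 18.0, 15.0],
        "detector_b": [35.0, 55.0, 42.0],
    }
    for name, stats in summarize_runs(example_runs).items():
        print(f"{name}: mean {stats['mean']:.1f}%, spread {stats['spread']:.1f} points")
```

A wide spread on identical text tells you the score is noise at that resolution, so a small drop after a rewrite may not mean anything.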

Ethics and platform rules

If you plan to use this for:

• Academic writing

• Client work that claims “fully human written”

• Platforms with strong AI rules

you take a risk. Detection tools are not perfect, but platforms tend to care more about intent than detection scores. If you try to hide all AI traces, you walk into gray areas that can hurt you later.

Quick comparison and alternative

Since you mentioned you want real results, I’d say:

Use Undetectable AI if:

• You want lower detection scores on ZeroGPT and GPTZero.

• You are ready to edit every piece by hand.

• You do not rely on a simple refund promise.

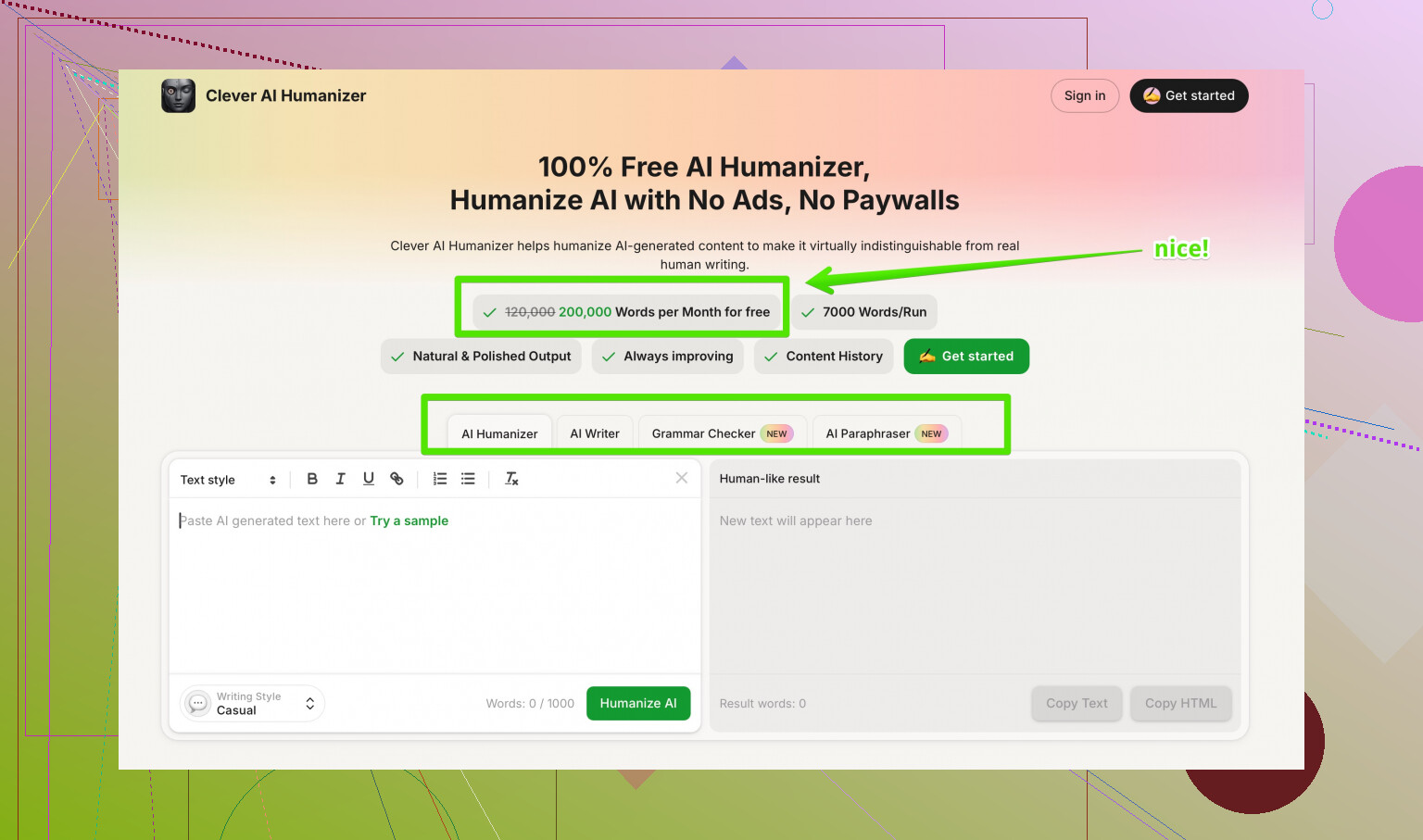

If you want to test something else, you might want to look at Clever AI Humanizer. It focuses on making AI text sound closer to how an actual writer drafts content, not only on randomizing patterns. It tries to vary sentence structure, adjust vocabulary to topic, and reduce those obvious AI “tells”.


My experience with it:

• Output often needs less heavy editing than Undetectable AI, especially for blog or newsletter style content.

• Detection scores on Originality.ai and GPTZero were competitive in my tests for mixed content.

• Tone control behaved more predictably when I kept the settings in the middle.

Not saying it is magic. You still need to review every piece. But if your goal is both “more human sounding” and “better chance with detectors”, I found it more balanced than throwing everything through Undetectable on max stealth.

Practical workflow suggestion

If you insist on using Undetectable AI:

- Generate base content with your main AI model.

- Run in Undetectable on a moderate setting, not max.

- Check on at least two detectors that matter to you, for example GPTZero and Originality.ai.

- Manually edit for:

• Tone consistency

• Repetition

• Weird first person language

• Missing facts or steps

- Run a quick grammar pass in something like Grammarly.

- Save a clean human edited version for future reference so you do not loop content through tools again and again.

If you want minimal headaches and better balance, I would start testing with Clever AI Humanizer first, then keep Undetectable AI as a secondary option when you need extra randomness for a tough detector.

I’ve run Undetectable AI in client workflows too, and my take kinda lines up with @mikeappsreviewer and @himmelsjager but with a few twists.

On the core question: does it actually help with detectors?

Yeah, mostly. I’ve seen:

- ZeroGPT: big drops, similar to what they reported.

- GPTZero: hit or miss, topic dependent.

- Originality.ai: still pretty stubborn.

- Copyleaks / Writer: very inconsistent, sometimes it looks “human enough,” sometimes it screams AI.

So if your goal is “never get flagged anywhere,” that is fantasy territory. If your goal is “look less obviously AI to most tools,” Undetectable can help, but you’re still rolling dice, especially on long, technical stuff.

Where I slightly disagree with the other two: for some marketing / newsletter copy, I actually got usable text out of “More Human” with only a light edit, but only when the original draft already had decent structure and voice. If your base text is stiff or super generic, Undetectable tends to amplify the awkwardness, not fix it. It starts throwing in random opinions and filler, and you end up cleaning more than you saved.

Big issues I kept bumping into:

- Tone drift inside the same piece

- Repeated connective phrases like they mentioned, which look “human” in the sense of messy, but also cheap and lazy

- Occasional factual blurring when the rewrite is too strong

If your work is sensitive (academic, legal, compliance), that last one is a dealbreaker. You cannot trust it blindly.

On the tools question: I’d treat Undetectable as a pattern randomizer, not a writing tool. That means:

- It is fine as the last step after you are already happy with content.

- It is terrible as the first step if you expect clean prose.

For a more balanced approach, Clever AI Humanizer is worth a look. In my tests, it focused more on natural sentence rhythm and topic‑appropriate vocab instead of just scrambling patterns, so the editing time was shorter. Detection scores were in the same ballpark as Undetectable on GPTZero and Originality.ai, but the text sounded less like a panicked AI trying to cosplay a blogger.

If you want to dig into comparisons and people’s experiences with different tools, check this discussion:

real-world breakdown of the best AI humanizers discussed on Reddit

Bottom line:

- Use Undetectable AI if your main goal is to nudge detector scores and you already plan to edit by hand.

- Do not rely on it for “plug and publish” content.

- If you care about both readability and detection, Clever AI Humanizer is a solid second tool to test side by side.

And whatever you pick, assume every piece still needs a human brain on it at the end, or this stuff will bite you later.

Quick analytical take, since @himmelsjager, @andarilhonoturno and @mikeappsreviewer already covered a lot of ground from hands‑on tests.

Where I think they’re all right about Undetectable AI

- It can drag scores down on common detectors like GPTZero and ZeroGPT.

- Originality.ai tends to stay skeptical, especially on longer, structured pieces.

- Quality is the tax you pay: repetition, odd tone shifts, random “human‑ish” fillers, and occasional loss of precision.

I’d add one more practical point:

In real editorial workflows, the hidden cost is consistency, not just time. If you run a batch of articles through Undetectable on similar settings, they often come out with noticeably different voice “quirks.” That makes a blog or documentation set feel stitched together from multiple writers who did not talk to each other. None of you really dug into that angle, but it matters if you manage a brand.

Where I slightly disagree

- I do not fully buy the idea of using Undetectable as a “last step only” tool in every case.

For short, informal pieces (email intros, social captions, quick blurbs), it can sometimes be useful in the middle of the process:

- generate base draft,

- light human edit to get structure,

- quick Undetectable pass on moderate strength to roughen the AI fingerprints,

- final micro edit.

On tiny chunks under ~200 words, the risk of broken logic is lower and the tonal weirdness is easier to fix. On big guides or legal copy, I agree with you: it is dangerous as anything but a final, gentle touch.

- I am also less optimistic than @andarilhonoturno about “occasionally usable” newsletter output. For voice‑driven brands, Undetectable tends to normalize tone toward a generic middle. If your brand is snarky, highly technical, or very niche, you will spend as much time restoring personality as you saved by “humanizing” in the first place.

Where Clever AI Humanizer fits in

Since you asked about real‑world use, I see Clever AI Humanizer as more of a readability plus stealth layer, whereas Undetectable behaves like a pattern scrambler with side effects.

Pros of Clever AI Humanizer

- More stable tone: When you keep settings moderate, it usually keeps the original intent and style closer to what you started with. That helps with multi‑author blogs and client work where voice matters.

- Less obvious filler: It still adds connective phrases, but in my experience it avoids the heavy spam of things like “it is important to note” that Undetectable sometimes overuses.

- Editing time: For marketing, blog posts, and newsletters, the cleanup pass tends to be shorter. You fix nuance and brand voice, not basic sentence clunkiness.

- Balanced detection behavior: It often lands in the same ballpark as Undetectable on GPTZero and similar tools, without wrecking structure as much when you push it a bit harder.

Cons of Clever AI Humanizer

- Not magic for strict detectors: If your entire plan is “beat Originality.ai at all costs,” you will still be disappointed. It mitigates, it does not guarantee.

- Requires a decent base draft: If the original AI text is stiff, robotic, or fact‑light, Clever AI Humanizer will not rescue it. It polishes and humanizes rhythm more than it invents good content.

- Risk of subtle meaning drift: On technical or legal material, you still have to line‑edit and compare to the source. It is better than a hard scramble, but nuance can still shift.

- Extra tool in the chain: If your workflow is already crowded (LLM → editor → CMS → QA), adding one more service may not be worth small marginal gains.

When I’d use which

Undetectable AI:

- Short, noncritical pieces where you just want to avoid obvious AI patterns and do not care as much about fine‑grained tone.

- Experimental tests against specific detectors when you are mapping what your client’s platform is sensitive to.

Clever AI Humanizer:

- Longform blog content, newsletters, or branded pages where you actually care what the writing sounds like to a human, not just to a classifier.

- Situations where editing budget is tight and you cannot afford a 30‑minute cleanup on every 1,500‑word article.

If you do move forward with Undetectable AI, I would treat all detection scores as diagnostic hints, not success criteria. Combine a softer rewrite tool like Clever AI Humanizer for readability and vibe, then only bring Undetectable in where detectors are unusually aggressive and you are willing to babysit the output line by line.