I’m concerned about submitting content created with AI tools and whether it will be flagged as plagiarized by popular plagiarism checkers. I’m looking for advice from anyone who has experience with this, and want to understand how AI writing is detected or treated by these systems.

Short answer: Yes, AI-generated content can get flagged by plagiarism checkers, but a lot depends on how the AI tool works, how unique the output is, and which checker you use. Most AI tools (like ChatGPT and others) generate text that isn’t copied verbatim from the web, so in theory it’s “original”. But here’s the catch: lots of AI-written content comes out eerily similar when the prompt is basic or overused, so you could get a “false positive” on platforms like Turnitin or Grammarly.

If the AI is spitting out commonly used phrases, boilerplate intros, or straight-up copying parts it’s seen online, plagiarism checkers might flag it. Some tools are now better at detecting “AI style” text—meaning even if your words aren’t technically plagiarized, they might still get flagged for being “suspiciously machine-like” (lol, yes, that’s a thing now).

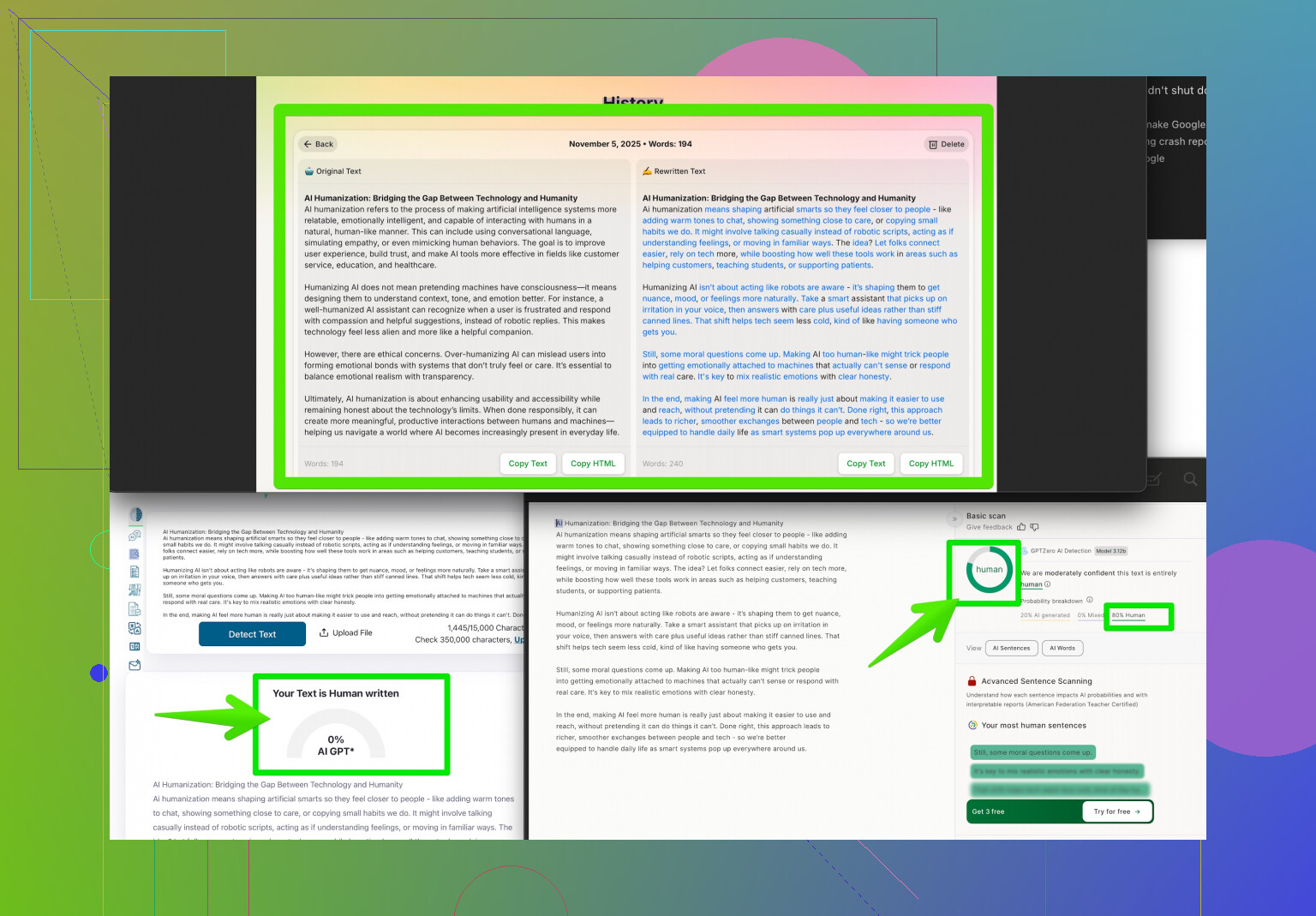

Pro tip: Run your AI-created content through multiple plagiarism checkers before submitting, especially for high-stakes or academic work. To make your content sound more authentic and reduce the chances of getting flagged, tools like the Clever AI Humanizer can come in handy for making your text read and feel more human. Basically, always edit and add your own voice, facts, and perspective; don’t just copy-paste what the AI gives you.

Bottom line: AI content usually passes most plagiarism checks, but there’s nuance. It can still be risky if you don’t double-check or humanize the output!

Honestly, this topic is a minefield lately. I’ve played around a LOT with AI content for work and school, and what most people miss is how inconsistent the checking tools can be. They don’t just flag “plagiarism”; sometimes they totally misfire on weird patterns or common phrases that tons of people (and bots) use. @cacadordeestrelas made some solid points about AI outputs being a little too generic or “robotic,” and that’s dead-on, but I’ll push back slightly: sometimes AI-generated content is sneakily close to phrasing used all over the internet, even if it’s not an outright copy-paste.

Something I’ve learned: even if AI writes “new” stuff, these checkers (especially Turnitin) LOVE to flag text when too many consecutive words match something in their database. So repetitive AI filler like “In conclusion, it is important to note…” or “Advancements in technology have greatly impacted society…” tends to get flagged right away. Seriously, watch out for those.
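To make the “consecutive words” idea concrete, here’s a toy Python sketch of word n-gram matching, which is the basic idea behind catching runs of matching words. Big assumption: Turnitin’s actual algorithm and database aren’t public, so the 5-word window, the hypothetical `overlap_ratio` helper, and the two-sentence “database” below are all made up for illustration.

```python
import re


def word_ngrams(text, n=5):
    """Return the set of n-word runs (lowercased, punctuation stripped)."""
    words = re.findall(r"[a-z']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(submission, database, n=5):
    """Fraction of the submission's n-word runs that appear in any database text.

    Hypothetical helper for illustration; real checkers use their own methods.
    """
    sub = word_ngrams(submission, n)
    if not sub:
        return 0.0
    known = set()
    for doc in database:
        known |= word_ngrams(doc, n)
    return len(sub & known) / len(sub)


# Made-up "database" of previously seen text.
database = [
    "In conclusion, it is important to note that technology matters.",
    "Advancements in technology have greatly impacted society in many ways.",
]

generic = ("In conclusion, it is important to note that advancements "
           "in technology have greatly impacted society.")
personal = ("My grandmother still writes letters by hand, "
            "and her spelling beats autocorrect.")

print(f"generic:  {overlap_ratio(generic, database):.2f}")   # generic:  0.64
print(f"personal: {overlap_ratio(personal, database):.2f}")  # personal: 0.00
```

The filler-heavy sentence shares 7 of its 11 five-word runs with the tiny database, while the personal one shares none. That’s the intuition behind avoiding stock phrases: they’re exactly the runs most likely to match.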

Instead of just running your stuff through more checkers, try actively rewriting, changing sentence structure, and dropping in more of your own references or wacky phrasing—stuff no AI would ever say. But tbh, tools like Clever AI Humanizer can help if you want that smoother, less robotic result, especially when you’re in a hurry.

One thing I don’t agree with is that “AI content usually passes most checks.” I’ve had original-seeming stuff get flagged way too often, and if your submission is important, that’s a headache you do NOT want. If your end game is authenticity, always double down on your voice.

BTW, if you want the inside scoop on how people are actually humanizing AI content, check out what Reddit users are saying for some brutally honest tips. There’s a thread that’s all about making your AI-generated writing sound human, with tons of little tricks folks have discovered.

Final word: Relying on AI alone is kind of playing with fire. Get creative, mix up your language, and treat AI as your helper, not your ghostwriter. You don’t want to be that person explaining yourself to a prof or boss… again.

Let’s break this down like a troubleshooting checklist for writers dealing with AI and plagiarism checkers:


AI Plagiarism Reality Check

Not gonna sugarcoat it: if you use basic AI prompts or leave the output untouched, you’re at the mercy of pattern-detecting bots like Turnitin. @viajantedoceu and @cacadordeestrelas both hit on how AI sometimes “creates” text by recombining common phrasing. It isn’t literally plagiarized, but algorithms catch the repeated chunks. You’ll see flags for stuff like “technology has changed the world,” and it’s maddening.

Beyond Boilerplate

Personally, I’ve noticed originality isn’t just about raw phrasing. AI has a knack for producing vanilla text devoid of specific context or insight, which is something checkers increasingly flag as “AI-generated.” That isn’t strictly plagiarism detection; it’s more like a quality filter.

Clever AI Humanizer: Pros & Cons

- Pros: It’s purpose-built to make AI output sound human, smoothing syntax and mixing up sentence patterns. This can reduce both AI-detector AND plagiarism hits, saving time if you aren’t up for extensive manual edits.

- Cons: Occasionally, you lose nuance or technical precision. The tool’s rewording isn’t perfect for complex, specialized topics—you still need human QA for subject matter accuracy.
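Why would “mixing up sentence patterns” matter to an AI detector? One signal that gets discussed publicly (sometimes called “burstiness”) is how much sentence length varies, since human writing is often said to mix short and long sentences more than AI output does. Here’s a toy Python sketch of that idea. Assumption: real detectors combine many undisclosed signals, and the hypothetical `length_variation` helper below is not any product’s actual method.

```python
import re
import statistics


def sentence_lengths(text):
    """Crudely split on runs of . ! ? and count words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_variation(text):
    """Coefficient of variation of sentence length (sample stdev / mean).

    Low values mean uniform sentence lengths. Hypothetical toy signal only.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.stdev(lengths) / statistics.mean(lengths)


uniform = ("Technology has changed the world. Society has changed a lot too. "
           "People use phones every day.")
varied = ("I hated it. Then, after three weeks of stubbornly refusing to touch "
          "the thing, I finally caved and bought one anyway. Oops.")

print(f"uniform: {length_variation(uniform):.2f}")  # uniform: 0.11
print(f"varied:  {length_variation(varied):.2f}")   # varied:  1.27
```

A low number only means the sentences are similar in length. It doesn’t prove anything was machine-written, which is one reason false positives happen.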


Competitors’ Angle

The other takes focus mainly on avoiding plagiarism flags and reducing mechanical tone, but there’s another dimension: AI detectors, a different kind of tool from plagiarism checkers, are getting aggressive. Focusing only on plagiarism misses the risk of “AI detection” flags altogether.

Pro Troubleshooter Moves

- After running your text through a humanizer like Clever AI Humanizer, break up sentences, add niche references, and rewrite intros/conclusions.

- Avoid being too predictable—insert your own stories or perspectives.

- Run your work through the actual checker your institution uses; not all detectors are created equal.

Long story short: don’t just trust raw AI. Use tools like Clever AI Humanizer for the rewrite, but don’t skip the old-fashioned “add your own spin” approach. Skimping on that is how people get stuck explaining false positives.