I used GPTHuman AI Review for feedback on my content, but the insights were confusing and didn’t match what I expected. I’m trying to understand how accurate this tool really is, what its limitations are, and how others are using it effectively. Can anyone explain how to interpret its results and share tips to get more reliable, actionable reviews?

GPTHuman AI review from someone who spent too long testing this thing

I tried GPTHuman because of the line on their page about being “the only AI humanizer that bypasses all premium AI detectors.” That line pulled me in. The tool did not hold up under testing.

If you want the original test thread, it is here:

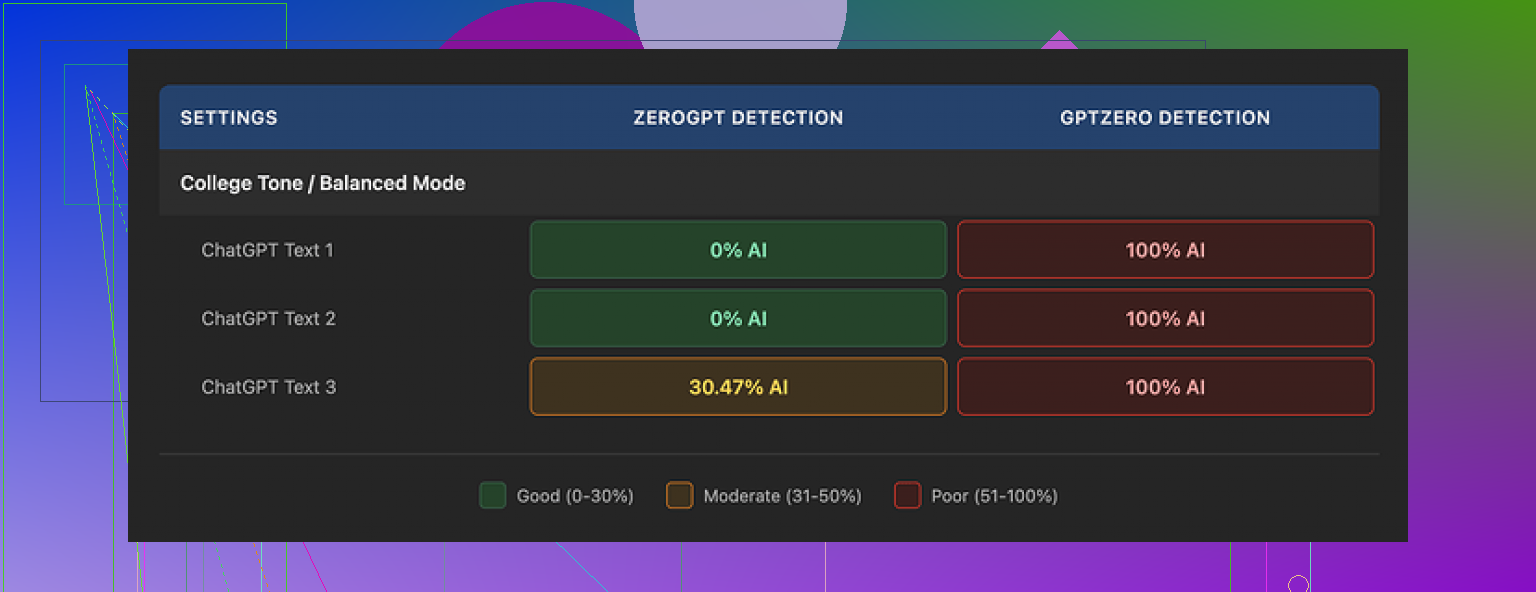

I ran three different samples through GPTHuman, then checked them with external detectors:

• GPTZero marked every single GPTHuman output as 100% AI. All three. No wobble, straight 100%.

• ZeroGPT was a bit looser. Two outputs came back at 0% AI, one landed in the ~30% AI range.

GPTHuman itself shows a built-in “human score” for each output. Those numbers looked great, high passing rates, but they did not line up with what GPTZero or ZeroGPT reported. If you trust external tools for client work or school stuff, the internal “human score” here feels misleading.

Second problem, and it is not small: the writing quality.

Across my tests I saw:

• Subject-verb mismatches

• Sentence fragments where the thought cut off halfway

• Word swaps that broke meaning, like synonyms dropped in the wrong context

• Endings that read like the text generator forgot what the topic was

To be fair, the structure looked fine at a glance. Paragraphs were spaced, nothing was mashed together, and it did not look like a wall of text. But if you read line by line, the grammar issues stack up fast. I would not send those outputs to a client or a professor without heavy editing.

Now the part that annoyed me more than the writing.

The free plan

The “free” tier gave me up to 300 words total. Not 300 words per run. Three hundred words across everything. After that, hard wall.

To finish my usual benchmark set, I had to make three new Gmail accounts. That alone told me enough about how they see “free access.”

Paid plans

Their pricing when I checked:

• Starter: from $8.25 per month if billed yearly

• Unlimited: $26 per month

The “Unlimited” label is a bit misleading. You get unlimited runs, but each output is capped. Max 2,000 words per run, even on the top plan.

So if you work with long reports, ebooks, or big blog posts, you will have to:

• Split the text into chunks

• Run each chunk

• Re-stitch and then fix the style breaks and grammar by hand
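For the splitting step, here is a minimal sketch of how I would chunk a long document before pasting it in. It assumes the 2,000-word cap mentioned above and breaks at blank-line paragraph boundaries where it can. The function name `split_into_chunks` and the `max_words` parameter are my own for illustration and have nothing to do with any GPTHuman API.

```python
def split_into_chunks(text: str, max_words: int = 2000) -> list[str]:
    """Split text into chunks of at most max_words words.

    Breaks at paragraph boundaries (blank lines) where possible. A single
    paragraph longer than max_words is cut mid-paragraph as a last resort.
    Whitespace inside each paragraph is normalized to single spaces.
    """
    chunks: list[str] = []
    current: list[str] = []  # paragraphs collected for the chunk being built
    current_count = 0        # number of words in `current`

    for para in text.split("\n\n"):
        words = para.split()
        if not words:
            continue  # skip empty paragraphs

        # Oversized paragraph: flush what we have, then slice it into pieces.
        while len(words) > max_words:
            if current:
                chunks.append("\n\n".join(current))
                current, current_count = [], 0
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]

        # Start a new chunk if this paragraph would push us over the cap.
        if current_count + len(words) > max_words:
            chunks.append("\n\n".join(current))
            current, current_count = [], 0

        current.append(" ".join(words))
        current_count += len(words)

    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Cutting at paragraph boundaries keeps the seams in places where a style break is least noticeable, but you still have to reread the joins by hand after re-stitching.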

Policy stuff you need to know before you pay

Reading their terms made me pause more than once:

• All payments are non-refundable. If you pay and hate it, the money does not come back.

• Your submitted content is used for AI training by default. There is an opt-out, but you need to go out of your way to use it.

• They state they can use your company name in their marketing unless you explicitly tell them not to.

So if you work with sensitive material, client docs, or anything under NDA, this is not a casual “paste it and go” tool. You need to decide if you are ok with training use, and you should probably email them about the company name bit if you pay from a business account.

Comparison with other “humanizers”

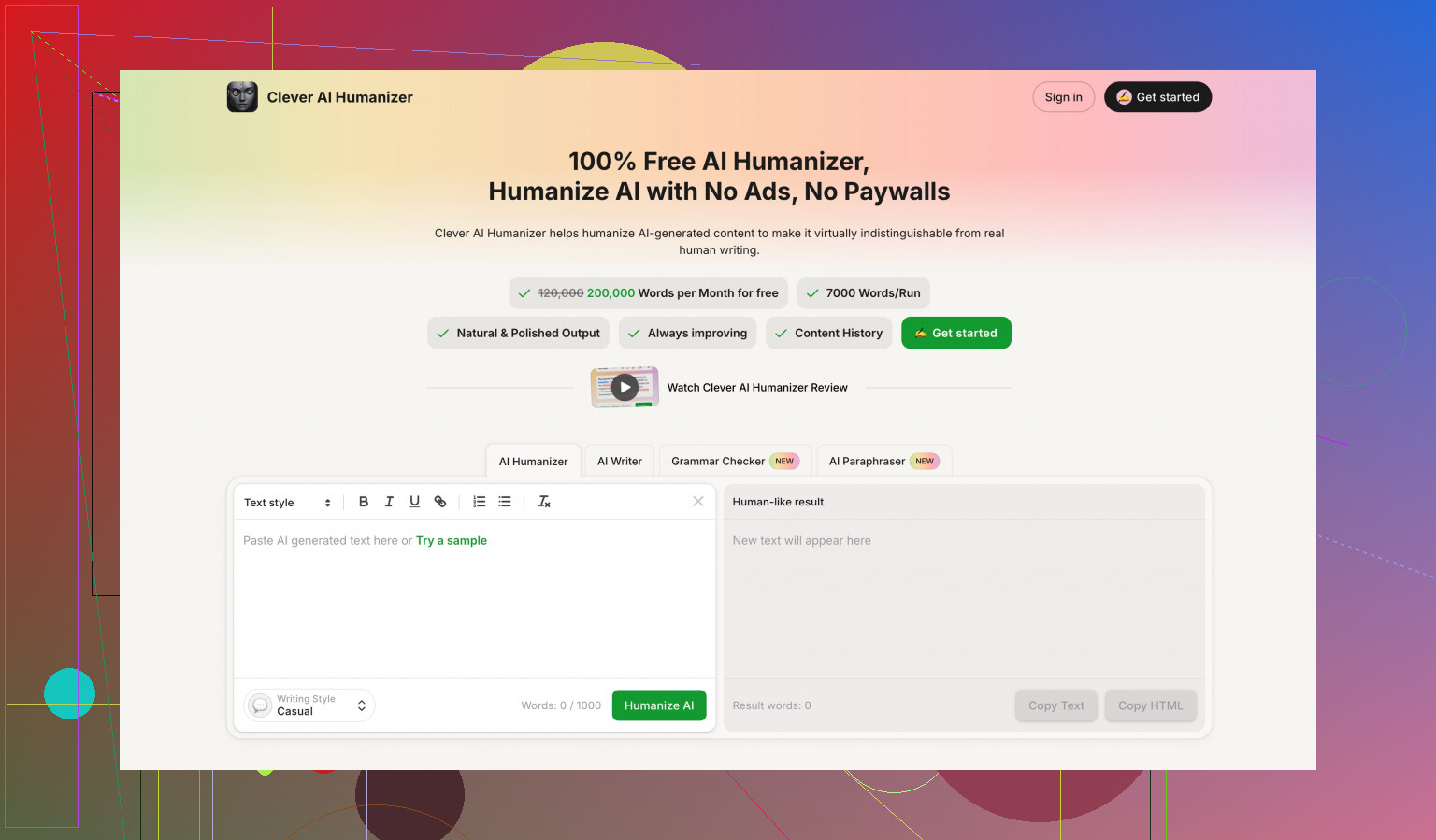

While testing different tools, I ran the same benchmark batch through multiple services. On my side, Clever AI Humanizer did better in two areas:

• Detector scores were stronger and more consistent

• Access was easier, since it was fully free at the time

If your goal is to get past detectors with minimal cleanup, GPTHuman did not meet that mark in my runs. You spend time fixing grammar, you hit low free limits, and you accept tough terms.

If you still want to try it, I would suggest:

• Start on a throwaway sample, not client work

• Check outputs against GPTZero and ZeroGPT, not only their internal “human score”

• Read every line out loud for grammar issues before sending it anywhere formal

• Decide upfront how you feel about your text being used for training and about the no-refund policy

That was my experience after multiple tests and three burner Gmail accounts.

Short answer on accuracy: treat GPTHuman as a noisy opinion, not a reliable judge of “humanness” or quality.

What you are seeing with confusing insights matches what others reported. @mikeappsreviewer focused on detectors and grammar. I agree with most of that, but I think the bigger issue for your use case is feedback quality, not detection scores.

Here is how I would think about it.

- What GPTHuman is good for

• Quick surface tweaks to make AI text look less uniform.

• Breaking up long, flat paragraphs.

• Adding some variation in sentence length and word choice.

If you feed in very “plain GPT” content, it often comes back with more noise, which detector tools sometimes interpret as “human-ish”. That does not mean the text is better.

- Where it falls apart for feedback

You used it for feedback on your content. That is the weak spot.

Common issues people run into:

• Vague comments like “add more detail” with no clear target.

• Conflicting advice from one run to the next.

• It “hallucinates” problems, for example, calling a clear sentence “unclear” or misreading your intent.

So if you expect editor-level guidance, it will feel random and confusing.

- Accuracy vs expectations

If your expectation is:

• “Tell me if this sounds human to a detector.”

Accuracy is low, since external tools like GPTZero often disagree with GPTHuman’s “human score”.

• “Give me useful editorial feedback.”

Accuracy is mixed. It catches some basic phrasing issues, but misses structure, audience fit, and logic flow.

Treat its feedback like a noisy Grammarly comment. You review it; you do not obey it.

- Main limitations

From what you and others report, plus what I have seen:

• Detection mismatch

Internal “human score” does not line up with GPTZero, ZeroGPT, etc. If your school or client relies on those, trusting GPTHuman’s internal score is risky.

• Style and coherence

Text can look more “messy” in a human way, but logic sometimes breaks. You get:

– Wrong word substitutions.

– Broken chains of thought.

– Tone shifts between paragraphs.

• Word limits and pricing

Short runs, capped outputs, tight free allowance. This hurts if you want full-document feedback or large reports.

• Data and policy concerns

Content used for training by default, non-refundable payments, and marketing clauses. If you handle client docs, that is a big red flag.

- How others use it without going nuts

Patterns I see that work better:

• Use GPTHuman on short sections only, 300 to 800 words, not whole documents.

• Use it for “style roughening”, then do your own edit for clarity and logic.

• Never trust one tool. Always cross check with:

– At least one external detector if detection matters.

– A grammar checker like LanguageTool or Grammarly.

- What to do with confusing feedback

Here is a practical way to make sense of its comments:

Step 1: Is the comment specific?

“Make this more clear” is useless. “Explain why X matters for Y” is useful. Ignore comments without a concrete ask.

Step 2: Check against your goal and audience.

If you write for experts, some AI feedback will push you to oversimplify. If you write for beginners, it might keep jargon you do not need. Keep your goal as the judge, not the tool.

Step 3: Cross check one paragraph with another tool.

Take one confusing section. Run it through something like Grammarly for clarity and through a human friend or peer if possible. If two or more sources disagree with GPTHuman, discard its advice on that point.
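If it helps to see the three steps as one rule, here is a rough sketch. Everything in it is hypothetical naming on my part (`keep_suggestion`, `is_specific`, `fits_goal`, `other_opinions`), and the yes/no answers are judgments you make yourself; nothing here is produced by GPTHuman or any other tool.

```python
def keep_suggestion(is_specific: bool, fits_goal: bool,
                    other_opinions: list[bool]) -> bool:
    """Decide whether a GPTHuman comment is worth acting on.

    is_specific:    Step 1, the comment has a concrete ask.
    fits_goal:      Step 2, the comment matches your goal and audience.
    other_opinions: Step 3, one entry per other source (tool or person),
                    True if that source agrees with the comment.
    """
    if not is_specific:
        return False  # "make this more clear" style comments get ignored
    if not fits_goal:
        return False  # your goal is the judge, not the tool
    disagreements = sum(1 for agrees in other_opinions if not agrees)
    return disagreements < 2  # two or more dissenting sources: discard


# Example: specific and on-goal, but Grammarly and a peer both disagree.
print(keep_suggestion(True, True, [False, False]))  # False
```

The point of writing it this way is that the tool's comment never gets a vote on its own; it only survives if it passes your checks first.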

- If your main goal is detection evasion

If you only care about getting flagged less by detectors, GPTHuman looks inconsistent. That matches what @mikeappsreviewer showed with GPTZero marking outputs as 100 percent AI.

In that specific use case, tools like Clever Ai Humanizer tend to aim more directly at detector patterns. You still need to check with external tools, but they often keep the grammar more stable and the text closer to your original intent.

I do not think any “humanizer” is magic. Detectors change, models change, and no one stays perfectly aligned forever. So treat all these tools as tactical helpers, not a finish line.

- How I would use GPTHuman, given all this

If you already paid or want to keep trying it:

• Use it as a first-pass style roughener on short pieces only.

• Ignore the built-in “human score”. Use GPTZero or ZeroGPT for detection checks if you care about that.

• Run your final text through a grammar checker.

• For important work, get at least one human review.

If you want more consistent, SEO-friendly, human-style output without wrecking meaning, then something like Clever Ai Humanizer plus manual editing is usually safer than relying on GPTHuman alone.

So, accuracy is mixed, feedback is spotty, and you need your own filter. Treat it as an opinionated tool, not an authority.

You’re not crazy, GPTHuman is kinda all over the place.

I tried it for feedback too, not just “humanizing,” and that’s where it really falls apart. What you’re calling “confusing insights” is basically the tool trying to play editor with a weak understanding of context.

Here’s how it looked from my side:

- Accuracy of the feedback

It’s decent at spotting very surface-level stuff:

- “This sentence is long”

- “You repeat this word a lot”

But once it tries to talk about clarity, tone, or structure, it drifts. I’d get comments like:

- Calling a clear, simple sentence “unclear”

- Telling me to “add examples” when there were literally examples in the same paragraph

- Suggesting tone changes that would clash with my audience

So in terms of editor-level accuracy, I’d put it at “rough suggestion generator,” not “trustworthy reviewer.”

- Why the insights feel off

It’s not actually reading your intent. It’s pattern-matching: “this type of sentence usually gets this type of comment.” That’s why:

- Sometimes it contradicts itself between runs

- It will praise a section in one pass and criticize basically the same thing in another

- It gives you generic advice that doesn’t map to your specific goal

This is where I slightly disagree with @espritlibre. They framed it like a noisy Grammarly. To me it’s worse than that, because Grammarly at least tends to stick to grammar and clarity. GPTHuman tries to act like a writing coach and ends up hallucinating problems that aren’t there.

- Limitations that really matter for feedback use

I won’t repeat what @mikeappsreviewer already broke down on detectors and terms, so I’ll focus just on feedback:

- It does not track your thesis or core point. It may say “restate your main idea here” even when you literally just did.

- It has no real sense of audience. It’ll happily tell you to “simplify” specialist content that actually needs jargon, or tell you to “add depth” to something that’s meant to be skimmable.

- It can push you into overediting. You fix one thing it complains about, then it complains about the fix.

If you follow all of its suggestions, your writing can end up more bloated and less confident.

- How other people are handling it

From what I’ve seen in threads like the ones from @mikeappsreviewer and @espritlibre, plus my own mess:

- They ignore the “human score” completely. It’s basically vibes.

- They use it on small pieces, not whole drafts.

- They treat the feedback as optional brainstorming, not rules.

I’ll add one tweak to that: I found it more useful to only ask it for specific things, like “improve transitions between these two paragraphs,” instead of generic “give feedback on my content.” Generic prompts seem to trigger the vague, conflicting suggestions.

- If your main goal is actually feedback

Honestly, if you want structured, reliable feedback, GPTHuman wouldn’t be on my short list at all.

You’d be better off with:

- A standard editor-style tool for clarity and grammar

- Then a dedicated “humanization” tool only if you really care about detectors

In that second category, Clever Ai Humanizer has been more predictable for me. It focuses more on making AI text read more naturally without shredding the meaning as much. It’s not magic and you still need to edit, but it breaks the text less and doesn’t try to pretend it’s your writing coach.

- How I’d actually use GPTHuman (if you already have it)

- Ignore the internal scores and most generic comments.

- Only pay attention to feedback that is:

  - Specific

  - Easy to verify

  - Clearly aligned with your goal

- Use it to generate alt phrasings, not to “judge” your writing. For example:

  - Paste one tricky paragraph.

  - Ask it for 3 alternative ways to say the same thing.

  - Pick or merge what works.

The bottom line:

It’s not very “accurate” as a critic of your writing. It’s just another opinion generator, and not a particularly consistent one. Use it like a noisy brainstorming partner, not like an authority.